Computational Physics Portfolio

Tip: Every highlighted link on this page is clickable. For fast navigation, use the Table of Contents.

Nels Buhrley Physics Student — Brigham Young University–Idaho

Table of Contents

- About

- Academic Projects

- Personal Projects

- Selected Results

- The Fulton Supercomputer at BYU

- Quick Start

- Repository Structure

About

I created this repository as a collection of thirteen numerical simulation and computational mathematics projects developed during my physics coursework and independent study. The work spans classical mechanics, electrostatics, statistical mechanics, quantum mechanics and number theory — all implemented in C++17 with Python 3 visualization pipelines.

Core Competencies

| Area | Details |

|---|---|

| Languages | C++17, Python 3 |

| Numerical Methods | Runge-Kutta (RK4), Euler-Cromer, Störmer-Verlet, Monte Carlo (Metropolis), Finite Differences, Successive Over-Relaxation, FFT spectral analysis |

| Parallelism | OpenMP multi-threading, manual thread allocation, and parallel logic implementation in tightly coupled simulations |

| High-Performance Computing | BYU Supercomputer — SLURM batch scheduling with up to 128 CPU cores for intensive simulations (Projects 4, 6, 7) |

| I/O & Visualization | CSV, NPZ (via cnpy/zlib), Matplotlib (3D surfaces, contour maps, animations, phase-space portraits), Plotly (interactive HTML plots) |

| Build Systems | GNU Make with multi-target builds (debug, release, profile-guided optimization) |

Academic Projects

| # | Project | Physical System | Numerical Method | OpenMP | HPC |

|---|---|---|---|---|---|

| 1 | Realistic Projectile Motion | 3D ballistics with drag, spin (Magnus), wind | 4th-order Runge-Kutta | — | — |

| 2 | Driven Damped Oscillations | Nonlinear pendulum → periodic & chaotic regimes | RK4 + Euler-Cromer; Poincaré sections | — | — |

| 3 | Celestial Dynamics | N-body gravitational orbits (full Solar System) | RK4, Euler-Cromer, Störmer-Verlet | — | — |

| 4 | Overrelaxation | 3D Laplace’s equation (electrostatics) | Red-Black SOR with optimal $\omega$ | ✓ | ✓ |

| 5 | Oscillations on a String | Damped stiff wave equation + spectral analysis | Finite differences (2nd + 4th order) + KissFFT | ✓ parallel spatial | — |

| 6 | Diffusion | 3D Brownian random-walk ensemble | Monte Carlo with reflective BCs | ✓ parallel particles | ✓ |

| 7 | The Ising Model | 3D ferromagnetic phase transition | Metropolis MCMC, checkerboard sweep | ✓ multifactor parallel | ✓ 128 CPUs |

| 8 | Molecular Dynamics | 2D Lennard-Jones fluid (400 particles) | Velocity Verlet, O(N²) pair forces | ✓ thread-local accumulators | ✓ 8 CPUs |

| 9 | Quantum Mechanics | 1D Schrödinger equation, symmetric polynomial potentials (even degrees: 2, 4, 6, 8, 10) | Numerov 4th-order integration, nodal bracketing, dual shooting/matching bisection | — | — |

Progression

The projects follow a deliberate arc of increasing computational sophistication:

- Projects 1–3 build fluency with ODE integration (RK4, symplectic methods) and interactive simulations

- Project 4 introduces PDE solving, iterative methods, and OpenMP parallelism

- Projects 5–6 combine PDE/stochastic methods with spectral analysis and 3D particle tracking

- Project 7 synthesizes everything: statistical physics, Monte Carlo methods, precomputed lookup tables, multi-dimensional parameter sweeps, and full HPC deployment

- Project 8 tackles the hardest parallelization challenge — an $O(N^2)$ N-body problem where Newton’s third law optimizations create race conditions, resolved via thread-local accumulators and guided scheduling

- Project 9 applies boundary value problem solvers to quantum mechanics: energy quantization through nodal counting, high-order Numerov schemes, and dual-method bisection convergence (shooting via forward integration from origin; matching via backward integration with origin-centric boundary matching)

Personal Projects

| # | Project | Domain | Key Challenge | Parallelism |

|---|---|---|---|---|

| 1 | Collatz Conjecture (3n+1) | Number theory | Exhaustive sequence analysis for $n \leq 10^6$; recursive max-value chaining | — |

| 2 | Euler’s Idoneal Numbers | Number theory | Sieve over triple loop $a < b < c$ up to $5 \times 10^7$; thread-safe monotonic writes | ✓ OpenMP dynamic |

| 3 | Monty Hall Problem | Probability & Statistics | Monte Carlo simulation to empirically verify counterintuitive theoretical predictions | — |
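The caching idea behind the Collatz analysis can be sketched as follows. This is a minimal illustration, not the repository's implementation: the function names `collatzSteps` and `longestChainStart` are hypothetical, and the step-count convention (number of updates needed to reach 1) is an assumption. Each trajectory is followed only until it drops below its starting value, at which point the already tabulated count is reused.

```cpp
#include <cstdint>
#include <vector>

// Returns steps[n] = number of Collatz updates needed to reach 1 from n,
// for every n in [1, limit]. Index 0 is unused.
// Assumes every trajectory starting at or below `limit` eventually drops
// below its start value (true for all n in the range discussed here).
std::vector<std::uint32_t> collatzSteps(std::uint64_t limit) {
    std::vector<std::uint32_t> steps(limit + 1, 0);
    for (std::uint64_t n = 2; n <= limit; ++n) {
        std::uint64_t m = n;
        std::uint32_t count = 0;
        // Iterate until the trajectory falls below n; every smaller start
        // has already been tabulated, so the rest of the chain is known.
        while (m >= n) {
            m = (m % 2 == 0) ? m / 2 : 3 * m + 1;
            ++count;
        }
        steps[n] = count + steps[m];
    }
    return steps;
}

// Returns the start value with the longest chain (first one on ties).
std::uint64_t longestChainStart(const std::vector<std::uint32_t>& steps) {
    std::uint64_t best = 1;
    for (std::uint64_t n = 2; n < steps.size(); ++n) {
        if (steps[n] > steps[best]) best = n;
    }
    return best;
}
```

For example, `collatzSteps(100)[27]` evaluates to 111, the well-known chain length for a start value of 27.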

Selected Results

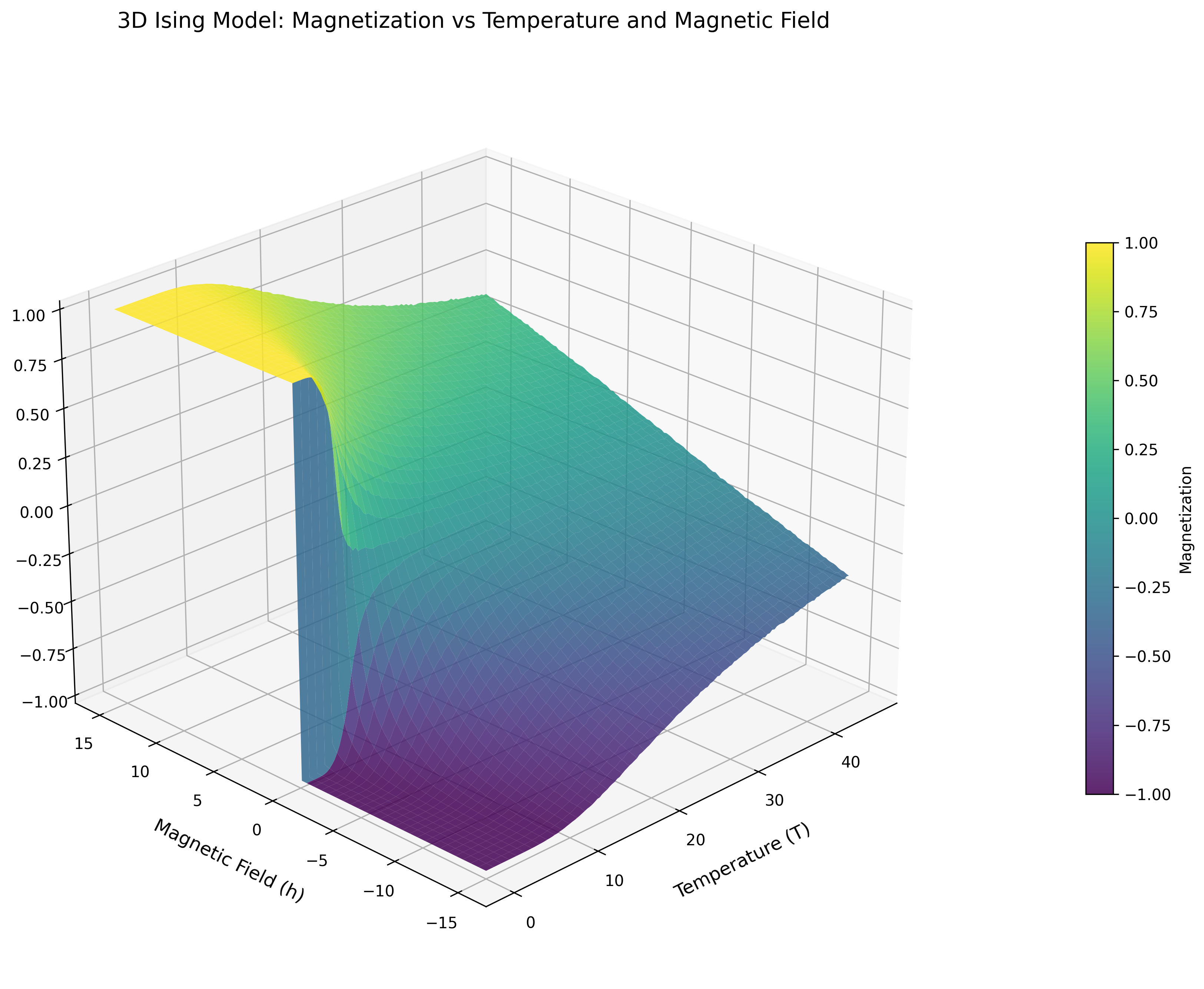

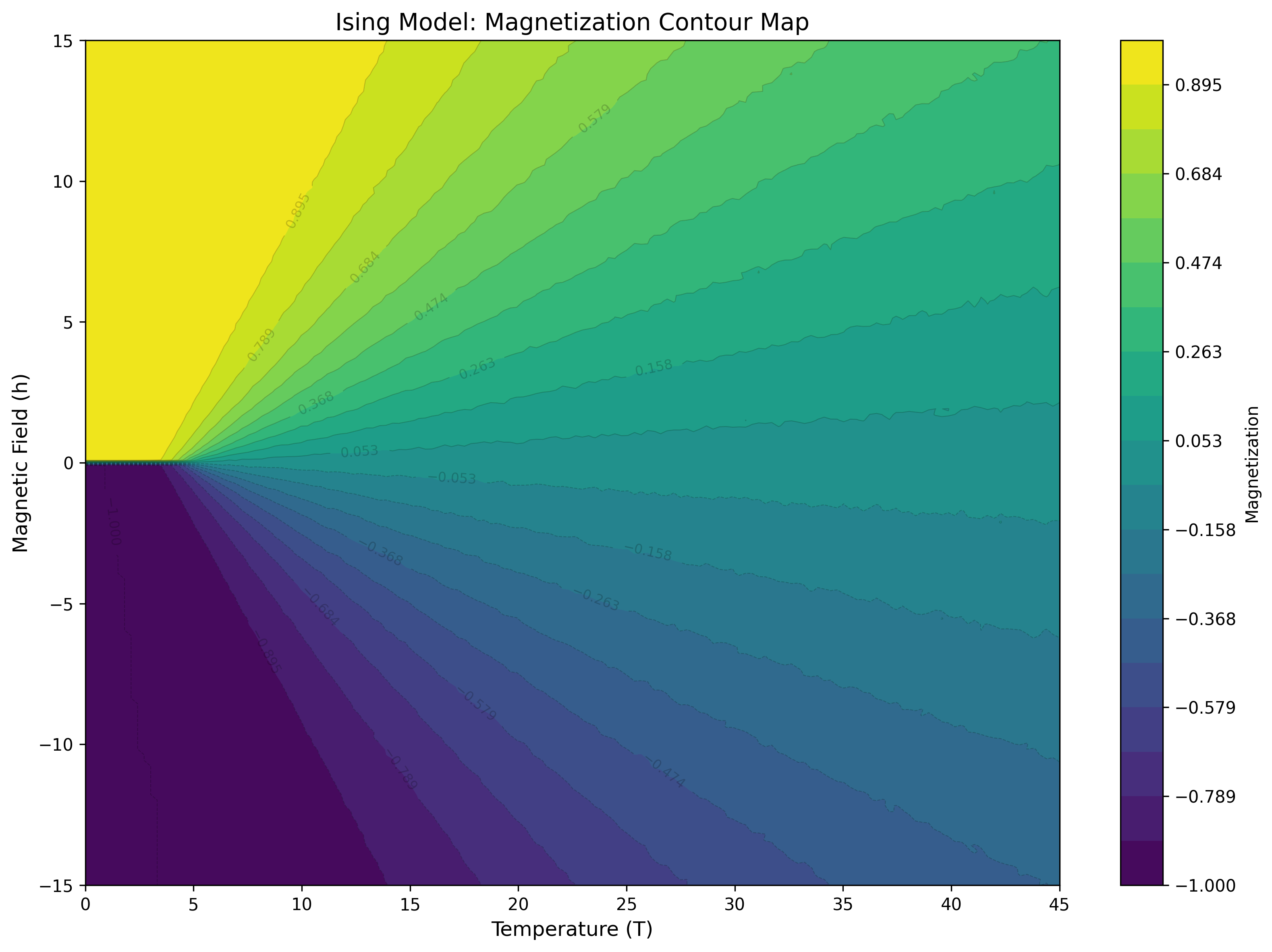

Project 7: The Ising Model

Magnetization surface and contour map of the 3D Ising model, revealing the ferromagnetic phase transition at $T_c \approx 4.51\,J/k_B$. Monte Carlo Metropolis algorithm with checkerboard sweep optimization across 2D temperature × magnetic field parameter space.
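A checkerboard Metropolis sweep with a precomputed acceptance table might look like the sketch below. It is an illustration under stated assumptions, not the project's code: units with $J = k_B = 1$, periodic boundaries, an even lattice side, a serial loop, and hypothetical names (`Ising3D`, `makeAcceptTable`, `checkerboardSweep`). Sites of one color have no neighbors of the same color, which is the property that makes each half-sweep safe to parallelize.

```cpp
#include <array>
#include <cmath>
#include <random>
#include <vector>

// Acceptance probabilities indexed by [spin index][neighbor-sum index].
// Spin index: 0 for s = -1, 1 for s = +1. Neighbor-sum index: (sum + 6) / 2.
using AcceptTable = std::array<std::array<double, 7>, 2>;

struct Ising3D {
    int L;                  // lattice side; must be even for the checkerboard
    std::vector<int> spin;  // +1 or -1, all up initially

    explicit Ising3D(int side) : L(side), spin(side * side * side, 1) {}

    int wrap(int i) const { return (i + L) % L; }  // periodic boundaries
    int index(int x, int y, int z) const {
        return (wrap(x) * L + wrap(y)) * L + wrap(z);
    }
    int neighborSum(int x, int y, int z) const {
        return spin[index(x + 1, y, z)] + spin[index(x - 1, y, z)] +
               spin[index(x, y + 1, z)] + spin[index(x, y - 1, z)] +
               spin[index(x, y, z + 1)] + spin[index(x, y, z - 1)];
    }
    double magnetization() const {
        long total = 0;
        for (int s : spin) total += s;
        return static_cast<double>(total) / spin.size();
    }
};

// Precompute min(1, exp(-dE / T)) for every (spin, neighbor sum) pair,
// where dE = 2 s (sum + h) is the energy cost of flipping spin s.
AcceptTable makeAcceptTable(double T, double h) {
    AcceptTable table{};
    for (int si = 0; si < 2; ++si) {
        const int s = 2 * si - 1;
        for (int ni = 0; ni < 7; ++ni) {
            const int sum = 2 * ni - 6;
            const double dE = 2.0 * s * (sum + h);
            table[si][ni] = dE <= 0.0 ? 1.0 : std::exp(-dE / T);
        }
    }
    return table;
}

// One full sweep: update all sites of color 0, then all sites of color 1.
void checkerboardSweep(Ising3D& lat, const AcceptTable& table, std::mt19937& rng) {
    std::uniform_real_distribution<double> uniform(0.0, 1.0);
    for (int color = 0; color < 2; ++color) {
        for (int x = 0; x < lat.L; ++x)
            for (int y = 0; y < lat.L; ++y)
                for (int z = (x + y + color) % 2; z < lat.L; z += 2) {
                    const int i = lat.index(x, y, z);
                    const int si = (lat.spin[i] + 1) / 2;
                    const int ni = (lat.neighborSum(x, y, z) + 6) / 2;
                    if (uniform(rng) < table[si][ni]) lat.spin[i] = -lat.spin[i];
                }
    }
}
```

The table removes every call to `std::exp` from the inner loop, since a 3D lattice with six neighbors admits only 14 distinct flip energies at fixed temperature and field.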

Project 8: Molecular Dynamics

Heating/cooling cycle simulation of 400 particles under a Lennard-Jones potential. Particle trajectories show the transition from an ordered grid to gas-like disorder during heating, then re-ordering during cooling. A shock wave propagates down from the top and reflects back up after the particles expand to the container boundary.
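The pair-force loop for a Lennard-Jones system can be sketched with thread-local accumulators as shown below. This is a hedged illustration rather than the project's implementation: reduced units ($\sigma = \varepsilon = 1$), no cutoff, no periodic images, and the names `Vec2` and `ljForces` are hypothetical. Each thread writes only to its own buffer, so applying Newton's third law to both particles of a pair cannot race. Compiled without OpenMP, the pragmas are ignored and the loop runs serially.

```cpp
#include <vector>

struct Vec2 {
    double x = 0.0;
    double y = 0.0;
};

// Lennard-Jones forces on every particle, visiting each pair once.
std::vector<Vec2> ljForces(const std::vector<Vec2>& pos) {
    const int n = static_cast<int>(pos.size());
    std::vector<Vec2> total(n);

    #pragma omp parallel
    {
        std::vector<Vec2> local(n);  // private to this thread

        // Row i has n - i - 1 pairs, so guided scheduling balances the work.
        #pragma omp for schedule(guided)
        for (int i = 0; i < n; ++i) {
            for (int j = i + 1; j < n; ++j) {
                const double dx = pos[i].x - pos[j].x;
                const double dy = pos[i].y - pos[j].y;
                const double inv2 = 1.0 / (dx * dx + dy * dy);
                const double inv6 = inv2 * inv2 * inv2;
                // |F| / r for U = 4 (r^-12 - r^-6)
                const double f = 24.0 * inv6 * (2.0 * inv6 - 1.0) * inv2;
                local[i].x += f * dx;  // force on i
                local[i].y += f * dy;
                local[j].x -= f * dx;  // equal and opposite force on j
                local[j].y -= f * dy;
            }
        }

        // Merge the per-thread buffers one thread at a time.
        #pragma omp critical
        for (int k = 0; k < n; ++k) {
            total[k].x += local[k].x;
            total[k].y += local[k].y;
        }
    }
    return total;
}
```

The design trades memory (one buffer of size $N$ per thread) for the ability to keep the half-loop over pairs, which halves the number of force evaluations compared with a full $N \times N$ loop.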

Project 4: Overrelaxation (Electrostatics)

Interactive 3D visualization of electrostatic potential field solved via Successive Over-Relaxation (SOR) with optimal relaxation parameter $\omega \approx 1.84$. Navigate through z-axis slices to explore the full 3D solution space ($N=1000^3$ grid points).
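A red-black SOR iteration for the 3D Laplace equation can be sketched as follows. The sketch assumes a cubic grid with fixed (Dirichlet) values on all six faces; the names `Grid3D` and `solveLaplaceSOR` are hypothetical, and the relaxation parameter is passed in rather than derived. Points of one color depend only on points of the other color, so each half-sweep is free of data races.

```cpp
#include <algorithm>
#include <cmath>
#include <cstddef>
#include <vector>

struct Grid3D {
    int n;                  // points per side, boundaries included
    std::vector<double> v;  // potential, flattened as (i * n + j) * n + k

    explicit Grid3D(int side)
        : n(side), v(static_cast<std::size_t>(side) * side * side, 0.0) {}

    double& at(int i, int j, int k) {
        return v[(static_cast<std::size_t>(i) * n + j) * n + k];
    }
};

// Relaxes interior points until the largest update in a sweep drops below
// tol. Boundary values are left untouched. Returns the number of sweeps
// performed (maxIter if the tolerance was never reached).
int solveLaplaceSOR(Grid3D& g, double omega, double tol, int maxIter) {
    const int n = g.n;
    for (int iter = 1; iter <= maxIter; ++iter) {
        double maxDelta = 0.0;
        for (int color = 0; color < 2; ++color) {
            #pragma omp parallel for reduction(max : maxDelta)
            for (int i = 1; i < n - 1; ++i)
                for (int j = 1; j < n - 1; ++j)
                    // Start k so that (i + j + k) % 2 == color.
                    for (int k = 1 + (i + j + 1 + color) % 2; k < n - 1; k += 2) {
                        const double avg =
                            (g.at(i + 1, j, k) + g.at(i - 1, j, k) +
                             g.at(i, j + 1, k) + g.at(i, j - 1, k) +
                             g.at(i, j, k + 1) + g.at(i, j, k - 1)) / 6.0;
                        const double delta = omega * (avg - g.at(i, j, k));
                        g.at(i, j, k) += delta;
                        maxDelta = std::max(maxDelta, std::fabs(delta));
                    }
        }
        if (maxDelta < tol) return iter;
    }
    return maxIter;
}
```

A quick sanity check is to impose a boundary potential that varies linearly along one axis: a linear function satisfies the discrete Laplace equation exactly, so the converged interior should reproduce it.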

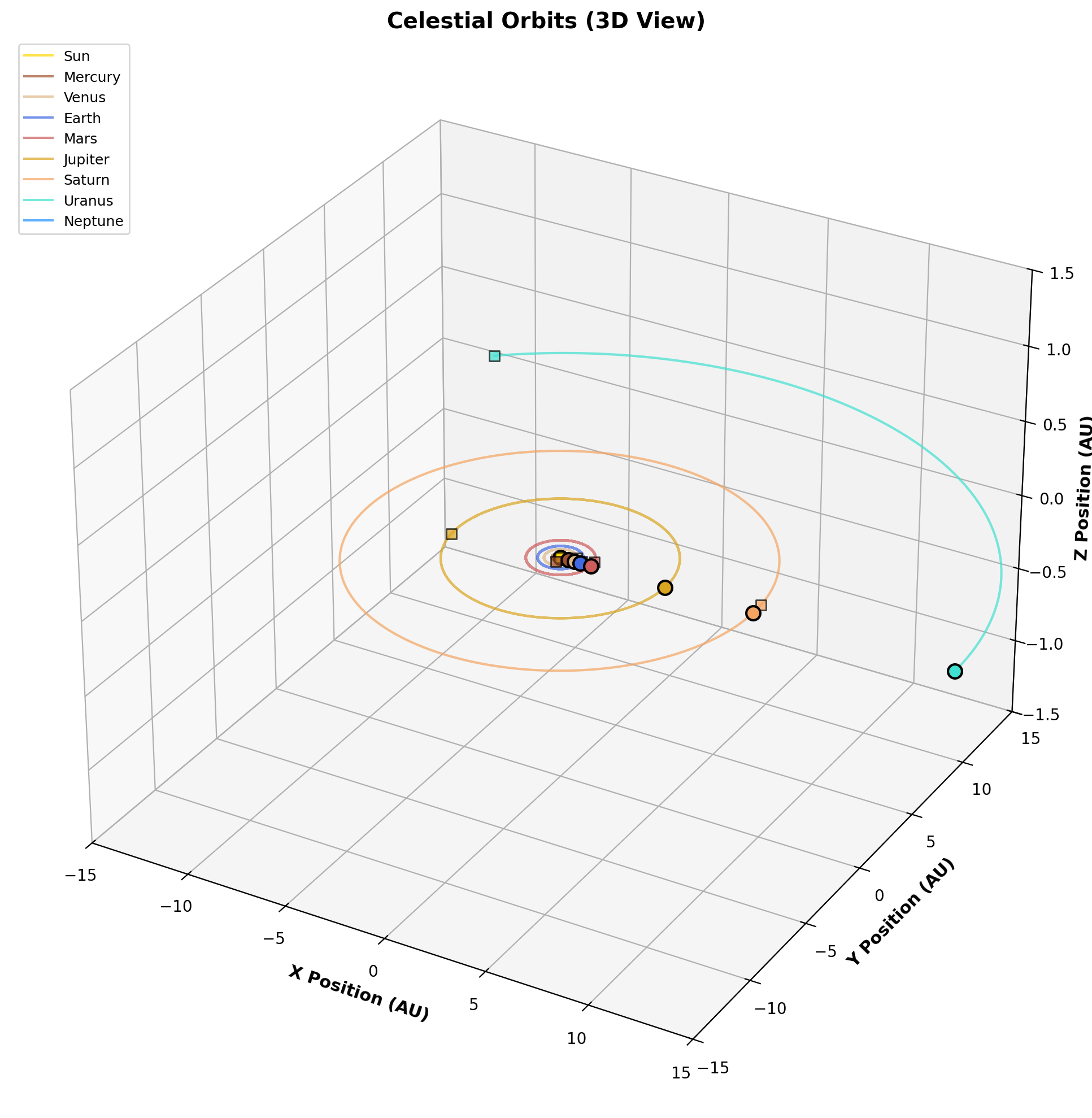

Project 3: Celestial Dynamics


N-body orbital dynamics of the Solar System computed with 4th-order Runge-Kutta integration. Left: static plot of the Solar System. Right: video of the Solar System with the mass of Jupiter set equal to about 0.94 solar masses.
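The RK4 update at the core of such an orbit integrator can be sketched for the simplest case: one planet around a fixed Sun. This is a reduced illustration, not the project's N-body code. It assumes units of AU and years, in which $GM_\odot = 4\pi^2$, and the names `State`, `derivative`, `rk4Step`, and `specificEnergy` are hypothetical.

```cpp
#include <array>
#include <cmath>

using State = std::array<double, 4>;  // x, y, vx, vy in AU and AU/yr

const double kPi = std::acos(-1.0);
const double kGM = 4.0 * kPi * kPi;   // GM of the Sun in AU^3 / yr^2

// Time derivative of the state for a planet orbiting a Sun fixed at the origin.
State derivative(const State& s) {
    const double r2 = s[0] * s[0] + s[1] * s[1];
    const double r3 = r2 * std::sqrt(r2);
    return {s[2], s[3], -kGM * s[0] / r3, -kGM * s[1] / r3};
}

// Returns s + h * k, the intermediate states RK4 needs.
State advance(const State& s, const State& k, double h) {
    State out{};
    for (std::size_t i = 0; i < s.size(); ++i) out[i] = s[i] + h * k[i];
    return out;
}

// One classical 4th-order Runge-Kutta step of size dt.
State rk4Step(const State& s, double dt) {
    const State k1 = derivative(s);
    const State k2 = derivative(advance(s, k1, 0.5 * dt));
    const State k3 = derivative(advance(s, k2, 0.5 * dt));
    const State k4 = derivative(advance(s, k3, dt));
    State out{};
    for (std::size_t i = 0; i < s.size(); ++i) {
        out[i] = s[i] + dt / 6.0 * (k1[i] + 2.0 * k2[i] + 2.0 * k3[i] + k4[i]);
    }
    return out;
}

// Energy per unit mass; RK4 does not conserve it exactly, so tracking its
// drift is a useful check on the step size.
double specificEnergy(const State& s) {
    const double r = std::sqrt(s[0] * s[0] + s[1] * s[1]);
    return 0.5 * (s[2] * s[2] + s[3] * s[3]) - kGM / r;
}
```

Starting from `{1.0, 0.0, 0.0, 2.0 * kPi}` (a circular orbit at 1 AU), integrating for one year should bring the planet back close to its starting point.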

Project 9: Quantum Mechanics


Interactive visualization of bound states for symmetric polynomial potential wells (even degrees: 2, 4, 6, 8, 10). Each tab displays eigenstates with 3D wavefunction plots overlaid with the potential well shape. Eigenstates computed via Numerov 4th-order integration, energy quantization by nodal counting, and dual-method bisection refinement (selectable shooting method with forward integration or matching method with origin-centric convergence) achieving high precision ($\Delta E < 10^{-8}$).
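The shooting variant of this procedure can be sketched as below. It is a minimal illustration under stated assumptions, not the project's solver: units with $\hbar = m = 1$, a symmetric potential, a caller-supplied energy bracket that contains exactly one eigenvalue of the chosen parity, and hypothetical names (`shootFromOrigin`, `bisectEnergy`). The wavefunction is integrated outward from the origin with Numerov's method, and bisection finds the energy at which $\psi(x_{\max})$ changes sign.

```cpp
#include <cmath>
#include <functional>

using Potential = std::function<double(double)>;

// Integrates psi'' = -2 (E - V(x)) psi from x = 0 to xMax with Numerov's
// method and returns psi(xMax). Parity fixes the starting values.
double shootFromOrigin(const Potential& V, double E, bool even,
                       double xMax, int steps) {
    const double h = xMax / steps;
    // Numerov weight f(x) = 1 + h^2 k^2(x) / 12, with k^2 = 2 (E - V).
    auto f = [&](double x) { return 1.0 + h * h * 2.0 * (E - V(x)) / 12.0; };

    double psiPrev, psi;
    if (even) {
        // psi(-h) = psi(h) turns the Numerov relation at x = 0 into a
        // formula for the first step.
        psiPrev = 1.0;
        psi = (6.0 - 5.0 * f(0.0)) * psiPrev / f(h);
    } else {
        // Odd states vanish at the origin; the slope only sets the scale.
        psiPrev = 0.0;
        psi = h;
    }
    for (int n = 1; n < steps; ++n) {
        const double x = n * h;
        const double psiNext =
            ((12.0 - 10.0 * f(x)) * psi - f(x - h) * psiPrev) / f(x + h);
        psiPrev = psi;
        psi = psiNext;
    }
    return psi;
}

// Bisects on E until the bracket [eLo, eHi] is narrower than tol.
double bisectEnergy(const Potential& V, bool even, double eLo, double eHi,
                    double xMax, int steps, double tol = 1e-10) {
    double fLo = shootFromOrigin(V, eLo, even, xMax, steps);
    while (eHi - eLo > tol) {
        const double eMid = 0.5 * (eLo + eHi);
        const double fMid = shootFromOrigin(V, eMid, even, xMax, steps);
        if ((fLo < 0.0) == (fMid < 0.0)) {
            eLo = eMid;  // same sign: eigenvalue lies above eMid
            fLo = fMid;
        } else {
            eHi = eMid;  // sign change: eigenvalue lies below eMid
        }
    }
    return 0.5 * (eLo + eHi);
}
```

For the harmonic potential $V(x) = x^2/2$, the exact levels are $E_n = n + \tfrac{1}{2}$, which gives a convenient reference for checking the step size and $x_{\max}$ before moving on to the higher-degree wells.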

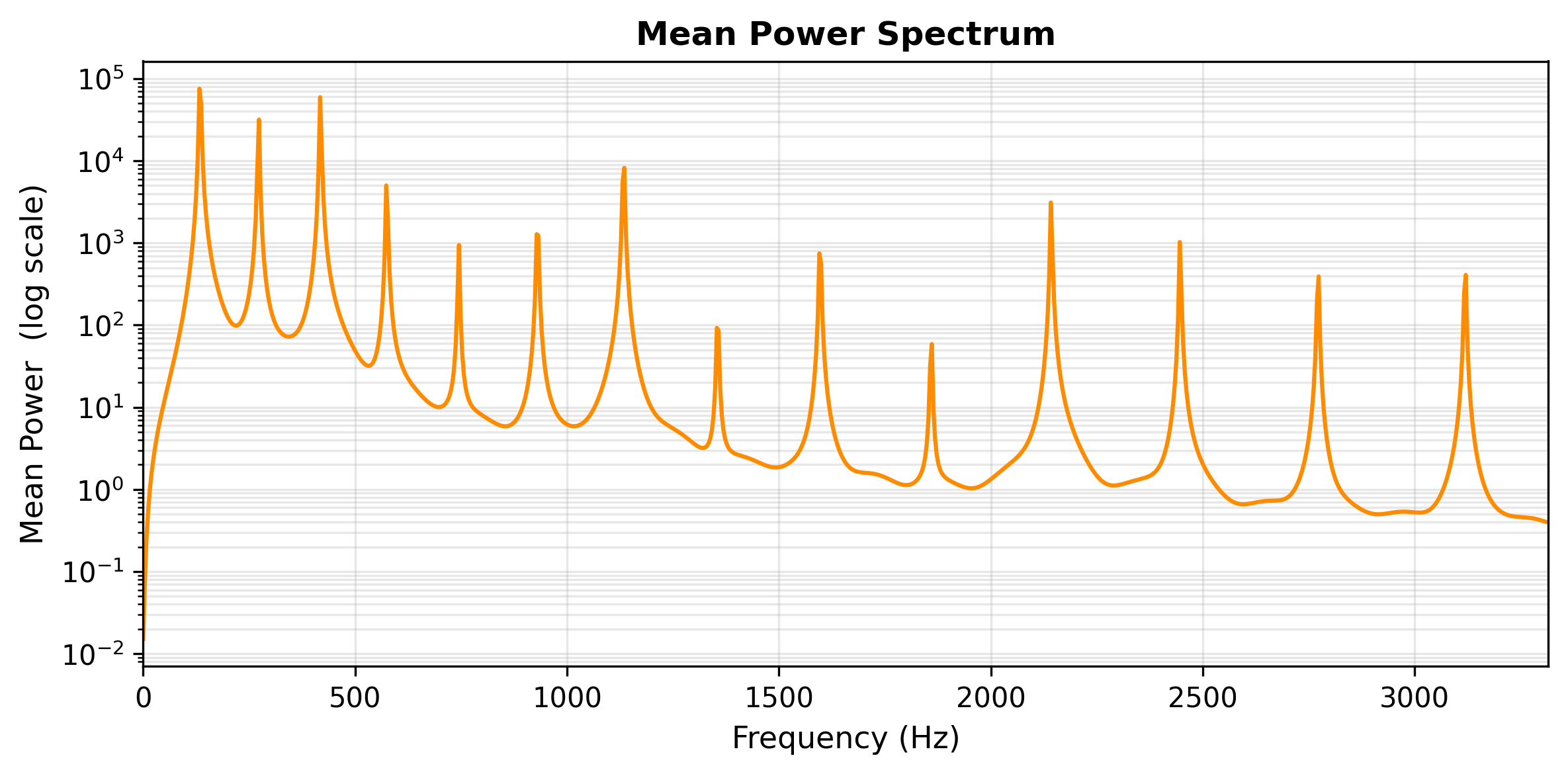

Project 5: Oscillations on a String

Mean power spectrum of transverse string oscillations showing discrete normal-mode peaks. FFT spectral analysis reveals harmonic structure and damping characteristics from finite-difference wave equation solver.

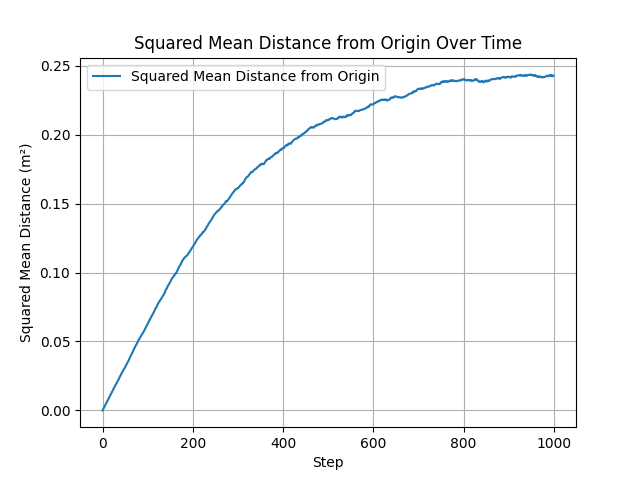

Project 6: Diffusion

Mean squared displacement from 3D random-walk Monte Carlo simulation, confirming Einstein’s diffusion law $\langle r^2 \rangle = 6Dt$. Ensemble of $10^6$ particle trajectories with reflective boundary conditions.
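The check against $\langle r^2 \rangle = 6Dt$ can be sketched with a free random walk, as below. This is an illustration only: it uses Gaussian steps with per-axis variance $2D\,\Delta t$, omits the reflective boundaries of the actual project (which would cap the displacement at long times), and the function name and per-particle seeding scheme are assumptions.

```cpp
#include <cmath>
#include <cstdint>
#include <random>

// Mean squared displacement of an ensemble of unconfined 3D random
// walkers, each taking `steps` Gaussian steps of duration dt.
double meanSquaredDisplacement(int particles, int steps, double D, double dt,
                               std::uint64_t seed) {
    const double sigma = std::sqrt(2.0 * D * dt);  // per-axis step std dev
    double sum = 0.0;

    // Trajectories are independent, so particles are split across threads.
    #pragma omp parallel for reduction(+ : sum)
    for (int p = 0; p < particles; ++p) {
        // One generator per particle: threads never share RNG state, and
        // the result does not depend on the number of threads.
        std::mt19937_64 rng(seed + static_cast<std::uint64_t>(p));
        std::normal_distribution<double> step(0.0, sigma);
        double x = 0.0, y = 0.0, z = 0.0;
        for (int s = 0; s < steps; ++s) {
            x += step(rng);
            y += step(rng);
            z += step(rng);
        }
        sum += x * x + y * y + z * z;
    }
    return sum / particles;
}
```

With $D = 0.5$, $\Delta t = 0.01$, and 100 steps, the expected value is $6Dt = 3$; an ensemble of a few tens of thousands of walkers should land within a few percent of that.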

The Fulton Supercomputer at BYU

The Fulton Supercomputer (managed by the BYU Office of Research Computing) is an HPC cluster that provides the processing backbone for my numerical modeling and simulation work. It manages over 35,000 CPU cores and 360+ GPUs, supported by a 6 PB parallel filesystem.

Architecture & Resources (2026 Specs):

Me at the BYU HPC cluster, February 2026

Workflow & Scheduling

Job Management

I utilize SLURM (Simple Linux Utility for Resource Management) to orchestrate simulations. This involves writing batch scripts that request specific hardware constraints to optimize performance, such as:

- `--constraint=avx512` for vector instructions.

- `--qos=standby` for access to unused private hardware.

Environment & Compilation

I develop on RHEL 9.4 login nodes, using Remote - SSH and the Linux terminal to manage my code and computational resources. Software dependencies are handled through the module load system, typically involving:

- GCC/G++ for core simulation logic.

- OpenMPI for distributed memory parallelism.

- Python 3/ffmpeg for visualization.

Physics Implementation

On this cluster, I implement numerical solvers for complex potentials where the computation scales as $O(N^2)$ or worse. For a system of $N$ particles, the potential $V$ is calculated as:

\[V = \sum_{i < j} \frac{q_i q_j}{4\pi\epsilon_0 |\mathbf{r}_i - \mathbf{r}_j|}\]

By using OpenMP for multi-threading and MPI for node-to-node communication, I can distribute these calculations, significantly reducing the wall time required for high-resolution datasets.

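```cpp
// Example: basic OpenMP parallelization of the pairwise interaction-energy sum.
// Assumes `particles`, `N`, and `calculate_interaction` are defined elsewhere.
double total_energy = 0.0;

// Each thread sums into a private copy of total_energy; OpenMP combines
// the copies when the loop finishes.
#pragma omp parallel for reduction(+:total_energy)
for (int i = 0; i < N; ++i) {
    for (int j = i + 1; j < N; ++j) {  // j > i counts each pair once
        total_energy += calculate_interaction(particles[i], particles[j]);
    }
}
```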

Simulation Deployment (This Portfolio):

| Project | Configuration | CPUs | Wall Time | Use Case |
|---|---|---|---|---|
| Project 4 (SOR) | CPU-only, #threads=16 | 16 | 30–45 min | Strong-scaling study of iterative PDE solver |
| Project 6 (Diffusion) | CPU-only, #threads=32 | 32 | 2–3 min | Parallelizing independent particle trajectories |
| Project 7 (Ising) | CPU-only, #threads=128 | 128 | 10–45 min | Large parameter-space sweep with checkerboard MCMC |
| Project 8 (Molecular Dynamics) | CPU-only, #threads=8 | 8 | 10 min–2 hrs | Thread-local force accumulation (race condition mitigation) |

Key Advantages for This Work:

- Scalability Testing: Weak and strong scaling studies for OpenMP efficiency (Projects 4, 7, 8)

- Parameter Sweeps: Multi-dimensional search spaces (Project 7: 2D temperature × field grid)

- Long-Running Simulations: Statistical ensembles (Project 6: millions of particle trajectories)

- Reproducibility: Identical hardware across multiple runs for benchmarking and verification

Quick Start

Every project follows the same workflow:

```bash
# 1. Navigate to a project
cd "Project 7: The Ising Model"

# 2. Build
make release

# 3. Run
./bin/main

# 4. Visualize
python3 plotting.py
```

Build Toolchain

| Tool | Version | Notes |
|---|---|---|
| Compiler | clang++ / g++ (C++17) | Platform-detecting Makefiles |
| OpenMP | Homebrew libomp (macOS) / native (Linux) | Projects 4–7 |
| zlib | System | For .npz output via cnpy |
| Python | 3.x | numpy, matplotlib, pandas |
| SLURM | BYU Supercomputer | Batch scheduling for HPC runs |

Prerequisites

```bash
# macOS
brew install libomp
pip3 install numpy matplotlib pandas

# Linux (e.g., BYU Supercomputer)
module load gcc python   # or equivalent
pip3 install numpy matplotlib pandas
```

Repository Structure

```
CPP_Workspace/
|-- README.md                                   # <- This file (Portfolio Hub)
|
|-- Project 1: realistic projectile motion/     # RK4 ballistics with drag & Magnus
|-- Project 2: driven damped oscillations/      # Nonlinear pendulum & chaos
|-- Project 3: Celestial Dynamics/              # N-body gravitational simulation
|-- Project 4: Overrelaxation/                  # 3D Laplace solver (SOR + OpenMP)
|-- Project 5: Occilations on a string/         # Wave equation FD + FFT spectral
|-- Project 6: Diffusion/                       # 3D random-walk Monte Carlo
|-- Project 7: The Ising Model/                 # 3D Metropolis MCMC (HPC)
|
|-- Personal Project 1: 3n+1/                   # Collatz conjecture exploration
|-- Personal Project 2: Idelic Numbers/         # Euler's idoneal number sieve
|
|-- include/                                    # Shared libraries
|   |-- cnpy.h                                  # NPZ I/O library
|   `-- vector3d.h                              # 3D vector utilities
|-- src/
|   `-- cnpy.cpp                                # cnpy implementation
|
|-- reference/                                  # Study notes & cheatsheets
|   |-- CPP_BASICS.md
|   |-- FUNCTIONS_REFERENCE.md
|   |-- NUMERICAL_PHYSICS.md
|   |-- OPTIMIZATION_TIPS.md
|   `-- SLURM_FORMATING.md
|
`-- Template Makefile                           # Reusable build template
```

Nels Buhrley — BYU-Idaho Physics, 2025–2026